Can robots learn to drive like humans? Using inverse reinforcement learning to imitate human driving in autonomous vehicles

Dr Kevin Minors, Dr Minh Le Kieu, Prof Alison Heppenstall - University of Leeds, and Dr Ed Manley - University College London

Exploring the effectiveness of inverse reinforcement learning as a method to imitate human driving in autonomous vehicles using a novel dataset.

Introduction

Autonomous vehicles are growing ever more popular as research and technology continue to advance in the transportation field. One key concern for autonomous vehicles is safety. This can be addressed by creating autonomous vehicles that, at the very least, drive similarly to humans. Once this is achieved, a variety of machine learning techniques can be used to improve the driving capability of autonomous vehicles to superhuman levels.

Project aims

One way for autonomous vehicles to learn to drive like humans is through inverse reinforcement learning. The aim of this project is to apply inverse reinforcement learning to a novel dataset of human driving in order to create a driving policy that would allow an autonomous vehicle to mimic the driving behaviour observed in humans.

Explaining the science

In standard reinforcement learning, an agent learns by interacting with an environment through states, actions, and rewards. In a given state, the agent takes an action and receives an associated reward. Over time, the agent learns which action to take in each state in order to maximise the total reward received.
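As a rough illustration of this loop, the sketch below runs tabular Q-learning, one standard reinforcement learning algorithm, on a made-up five-cell road in which the agent is rewarded only for reaching the last cell. The environment, the reward, and every hyperparameter are assumptions chosen for illustration; none of them come from the project.

```python
import random

N_STATES = 5        # hypothetical toy road: cells 0..4, cell 4 is the goal
ACTIONS = (-1, +1)  # move one cell left or one cell right

def step(state, action):
    """Return (next_state, reward, done) for the toy environment."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0  # the reward function is given to the agent
    return next_state, reward, done

def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values Q[state][action_index] by trial and error."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                a = rng.randrange(2)  # explore
            else:
                best = max(q[state])  # exploit, breaking ties at random
                a = rng.choice([i for i in range(2) if q[state][i] == best])
            next_state, reward, done = step(state, ACTIONS[a])
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
            if done:
                break
    return q

q_table = q_learning()
# Greedy action in each non-goal cell after training.
greedy_policy = [ACTIONS[max(range(2), key=lambda i: row[i])] for row in q_table[:-1]]
```

In this toy setting the learned greedy policy moves right in every cell, because that is the shortest route to the only reward.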

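Inverse reinforcement learning is the converse of this. Instead of being given a reward function, the agent is given demonstrations of behaviour, such as recorded human driving, and infers a reward function that this behaviour appears to maximise. A policy learned from the recovered reward function should then behave like the demonstrator.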
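To make the idea concrete, here is a minimal sketch in the style of maximum entropy inverse reinforcement learning, which starts from a demonstration and estimates a reward for each state. The source does not say which inverse reinforcement learning algorithm the project used, so this choice, the five-cell toy road, the single demonstration, and the learning rate are all assumptions for illustration only.

```python
import math

N_STATES = 5        # hypothetical toy road: cells 0..4
ACTIONS = (-1, +1)  # move one cell left or one cell right
HORIZON = 8         # number of time steps in each demonstration

def move(state, action):
    """Deterministic toy dynamics, clamped to the ends of the road."""
    return min(max(state + action, 0), N_STATES - 1)

def soft_policy(theta):
    """Backward pass: stochastic policy pi[t][s][a] under per-state reward theta."""
    value = [0.0] * N_STATES
    policy = [None] * HORIZON
    for t in reversed(range(HORIZON)):
        new_value, policy[t] = [], []
        for s in range(N_STATES):
            q = [theta[s] + value[move(s, a)] for a in ACTIONS]
            m = max(q)
            v = m + math.log(sum(math.exp(x - m) for x in q))  # soft maximum
            new_value.append(v)
            policy[t].append([math.exp(x - v) for x in q])
        value = new_value
    return policy

def expected_visits(policy, start=0):
    """Forward pass: expected number of visits to each state under the policy."""
    dist = [0.0] * N_STATES
    dist[start] = 1.0
    visits = [0.0] * N_STATES
    for t in range(HORIZON):
        nxt = [0.0] * N_STATES
        for s in range(N_STATES):
            visits[s] += dist[s]
            for i, a in enumerate(ACTIONS):
                nxt[move(s, a)] += dist[s] * policy[t][s][i]
        dist = nxt
    return visits

def maxent_irl(demos, iterations=200, lr=0.05):
    """Gradient ascent on the reward: demonstrated visits minus expected visits."""
    expert = [0.0] * N_STATES
    for trajectory in demos:
        for s in trajectory:
            expert[s] += 1.0 / len(demos)
    theta = [0.0] * N_STATES
    for _ in range(iterations):
        visits = expected_visits(soft_policy(theta))
        theta = [th + lr * (e - v) for th, e, v in zip(theta, expert, visits)]
    return theta

# One made-up demonstration: drive to cell 4 and stay there.
demos = [[0, 1, 2, 3, 4, 4, 4, 4]]
learned_reward = maxent_irl(demos)
learned_policy = soft_policy(learned_reward)
```

No reward is supplied here. The estimated reward ends up highest for the cell where the demonstrator chose to stay, and the policy derived from it prefers to move towards that cell.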

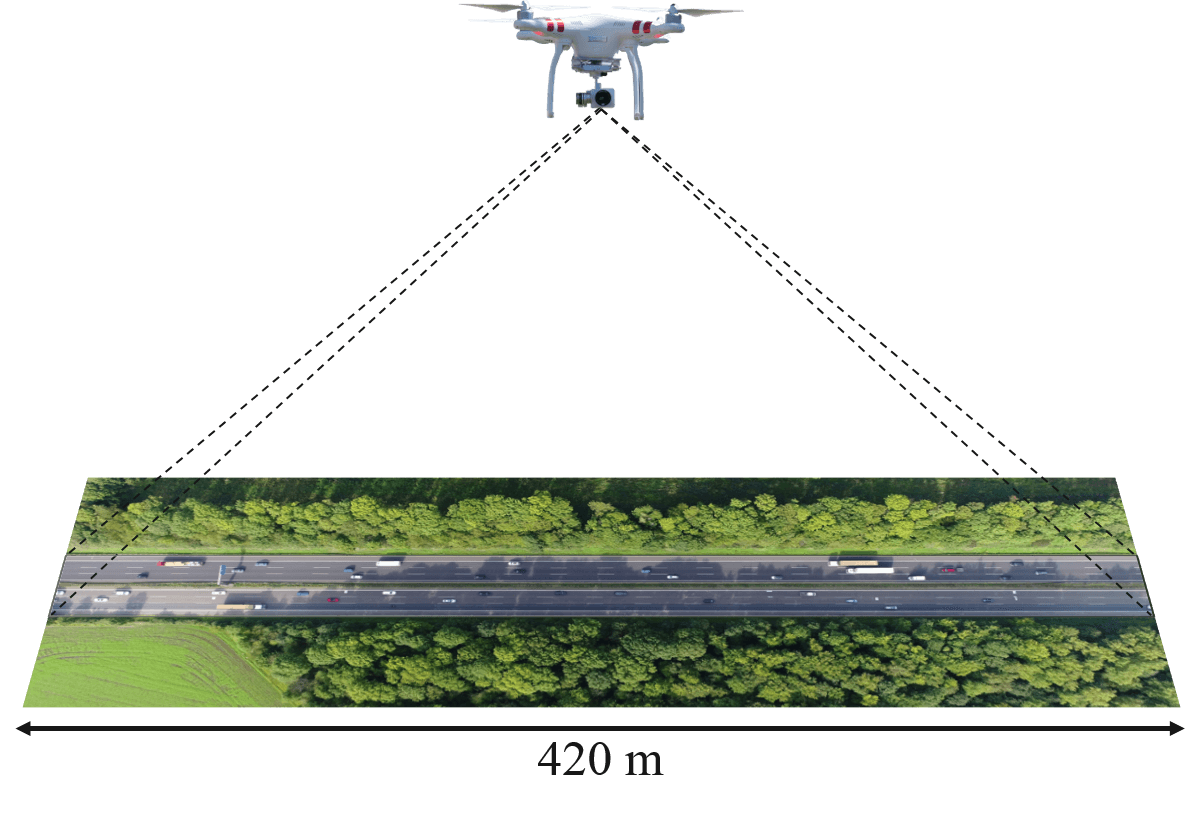

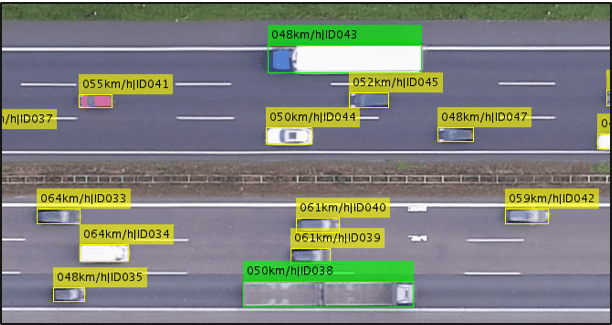

In the context of this project, we have a novel dataset of human driving behaviour. Drones were used to record video of vehicles driving on a motorway. Object detection was then applied to the footage to extract frame-by-frame details, including vehicle locations, speeds, accelerations, and distances to other vehicles. From these data, we can use inverse reinforcement learning to create a driving policy that allows an autonomous vehicle to mimic the human driving.
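The sketch below shows one way that per-frame vehicle positions could be turned into the quantities listed above. It is not the project's pipeline: the function names, the one-dimensional position along the road, and the frame rate are hypothetical, and real detections would usually need smoothing before differencing.

```python
def speeds_and_accels(positions, fps):
    """Finite-difference speed and acceleration from per-frame positions.

    positions: distance along the road in metres, one value per frame (assumed).
    fps: video frame rate in frames per second (assumed known).
    """
    dt = 1.0 / fps
    speeds = [(b - a) / dt for a, b in zip(positions, positions[1:])]
    accels = [(b - a) / dt for a, b in zip(speeds, speeds[1:])]
    return speeds, accels

def gap_to_leader(own_position, other_positions):
    """Distance to the nearest vehicle ahead, or None if the road ahead is clear."""
    ahead = [x - own_position for x in other_positions if x > own_position]
    return min(ahead) if ahead else None

# Made-up values, using one frame per second to keep the arithmetic simple.
speeds, accels = speeds_and_accels([0.0, 1.0, 2.0, 4.0], fps=1.0)
gap = gap_to_leader(10.0, [5.0, 18.0, 30.0])
```

Each frame then yields a state, such as speed and gap to the vehicle ahead, and an action, such as acceleration. State-action pairs of this kind are the form of demonstration that inverse reinforcement learning takes as input.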

Results

We have found that it is possible to use inverse reinforcement learning to create driving policies that imitate observed human driving behaviour. However, some driving trajectories are harder to mimic than others as it is difficult to capture the random behaviour of other drivers in a single reward function and policy. More consideration is required for these more stochastic situations.

Insights

- Inverse reinforcement learning can be used to learn driving policies that imitate observed human driving

- Randomness in human driving can cause complications for the training algorithms

Applications

Inverse reinforcement learning could allow autonomous vehicles to mimic human driving behaviour, giving a foundation for their driving capabilities. Other machine learning techniques could then be used to improve on this standard and reach superhuman levels. Other applications can be found in remote helicopter control, chatbots, and robotics.

Funders / Partners

The project has been funded through the Alan Turing Institute and the Leeds Institute for Data Analytics (LIDA). This is a collaboration with Ed Manley at UCL.

Research theme

- Inverse Reinforcement Learning

- Autonomous Vehicles

This project was undertaken as part of the LIDA Data Scientist Internship Programme.